CDS is Dead. Long Live CDS.

On 15 Nov 2025 by Mike Standard

AI has made clinical decision support (CDS), the great dream of my line of work, obsolete. We should invest our time clearing out its detritus to make way for the robots.

CDS – The Once Great Dream

Codified into informatics parlance in 2001 but drawing on concepts from long before medicine was computerized, CDS was the elusive art of using an electronic health record (EHR) to spot something its human users missed, alerting them to it and, thus, saving the day. Though a noble goal, in it were sown the seeds of its own destruction.

For what many practitioners, particularly those working at some very large EHR vendors, forgot was…the humans. What we now take for granted as human-in-the-loop.

Some ambitious summer intern at Epic would decide to have a go at CDS. They’d find the shiniest clinical practice guidelines from the American Academy of Whatever. They’d use EHR data to identify patients covered by the guideline and – wham-o! – a big, red, bold alert pops up in the doctor’s face to remind them of those august guidelines.

This runs into two huge problems with humans-in-the-loop. First, every guideline has its quirks, limitations and exceptions. If our intern was not extremely cautious in their design and/or the EHR data are not extremely comprehensive, there’s a good chance the guideline does not actually apply to this patient. Second, especially by picking the shiniest guidelines, there’s a great chance the doctor knows all this. Best case, the big, red, bold alert interrupts the doctor to remind them to do the thing they were trying to do before being interrupted. Worst case, the alert is wrong and frustrates the doctor while eroding trust in the system, which in turn forces alerts to be ever bigger, redder and bolder to draw attention above the noise.

In the ancient epoch B.A.I. (before AI), primitive CDS could be useful in niche circumstances of rare, unexpected conditions. Alerting an ER doc their patient has asthma or hypertension is an annoying waste. Alerts about one-in-a-million diseases with important implications for ER care could be useful! By definition, no one is expecting such cases.

Traditional CDS Has Had a Niche

Trouble is, also by definition, crafting such alerts requires a lot of labor for little return. In the dominant legacy EHRs of our day, CDS alerts are a boutique industry requiring all the expertise and fine craftsmanship of a hand-stitched Italian leather purse but with way worse profit margins. In a medium-sized health system, a well-constructed rare-disease CDS alert might fire once every few years. OMIM catalogs somewhere around 8,000 rare diseases. Thus, that health system needs to hand-stitch thousands of CDS leather bags to get anything more than scattershot coverage.

It’d be cool if Epic could take on the leather stitching to take advantage of the economy of scale. Lump every health system on Epic together, and rare diseases come up frequently. And indeed, Epic tries to do exactly this. Trouble is, every health system needs a slightly different leather bag. Lab tests, documentation standards, workflows and much else vary across systems. So getting a pre-fab bag from Epic still requires lots of hand-stitching at the health system level, thus ruining the potential economy of scale. To boot, in my experience, Epic can be quite sloppy with its stitching. We’ve had to correct glaring flaws in CDS alerts shipped from Epic.

Stop Worrying and Love AI

Years ago, I agreed that traditional CDS was a beautiful goal. Now, I invest far more time uprooting old, poorly designed alerts than hand-stitching new ones. Better to clear the way for AI – a far more efficient and scalable solution.

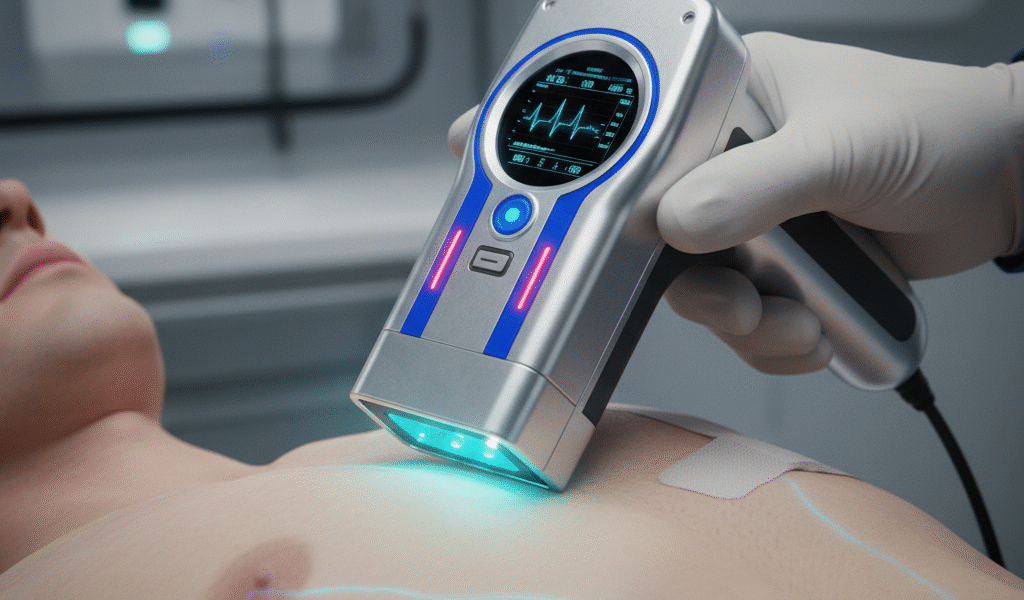

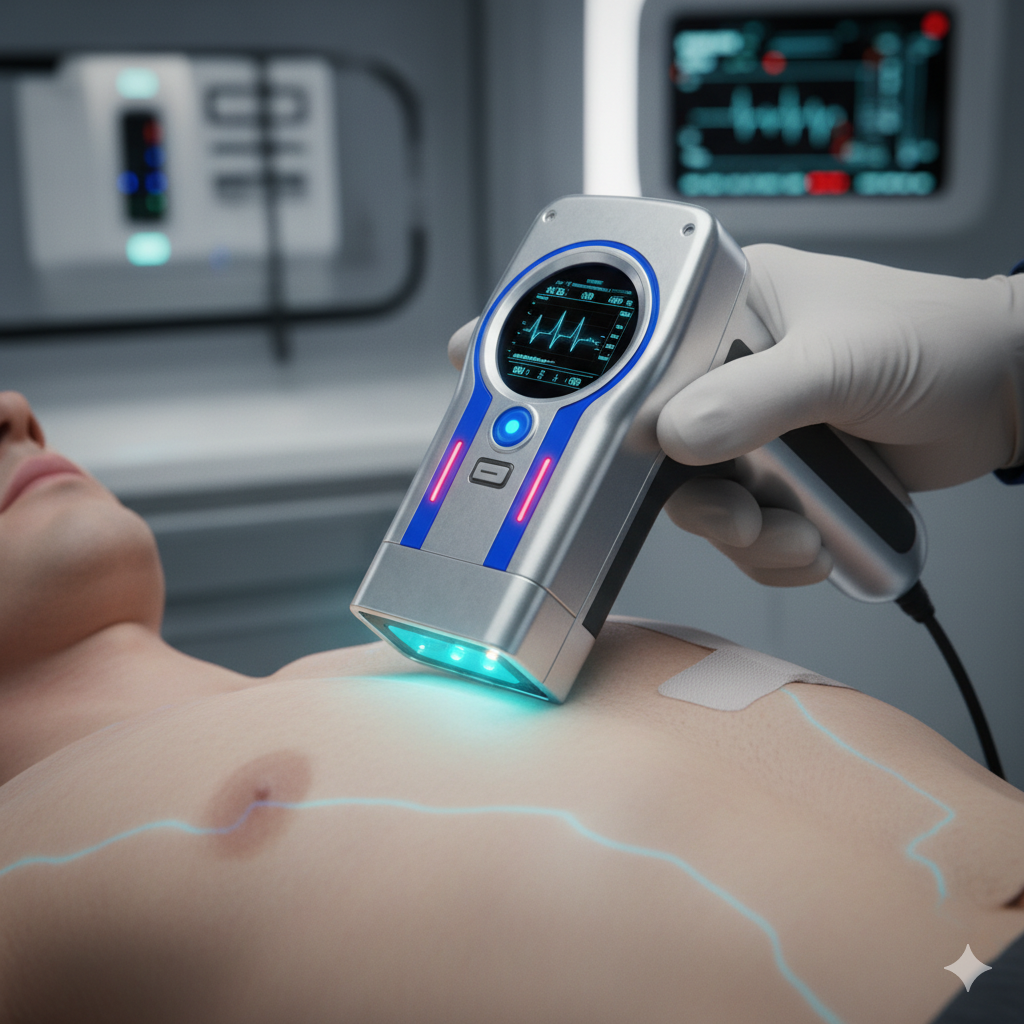

Even generalist AI large language models (LLMs) have effectively memorized the public internet, including OMIM, PubMed and their ilk. This makes them pretty darn good at spotting diseases, common or rare. Hence their perfect scores on medical exams. Instead of handcrafting CDS one rare disease at a time, we can easily feed a patient’s entire chart into such models at every encounter. Data exchange networks like Carequality and CommonWell mean it really can be the entire chart, not just our hospital’s slice of it. It’s the leap from hand-stitched Italian leather for one condition to the universal Star Trek magic tricorder.

I, for one, am investing as little as possible in traditional CDS, as much as possible in the data plumbing to connect secure AI to our charts.

CDS is dead. Long live CDS.

Aside: The Miseducation of ROC

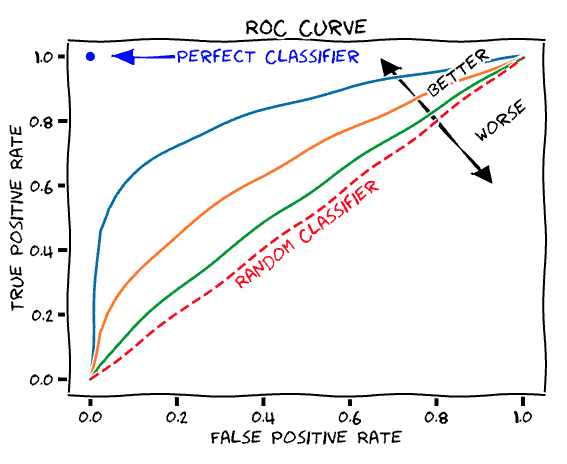

A version of the same statistical problems that bedevil traditional CDS is, for similar reasons, pervasive when researchers publish the algorithms that tend to underlie CDS. The standard research metric is the area under the curve (AUC) of a receiver operating characteristic (ROC) curve. ROC curves were developed to test early radar systems and are indeed a good way to describe a test’s signal-to-noise properties. The AUC is 0.5 if the radar is useless – its signal is no better than chance at spotting a plane amid its noise – and 1.0 if it’s perfect. Researchers, myself included, publish papers with AUCs of 0.7-0.9 and discuss how great they are. That may be true, but it doesn’t make those algorithms useful for CDS. For common conditions, your highly trained human doctor probably has an AUC of 0.99. Unless you’re designing for very low resource settings, any CDS tool under that lofty bar is probably useless.
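For readers who want to see what the 0.5 and 1.0 endpoints mean in practice, here is a minimal Python sketch. It assumes the rank-based reading of AUC: the probability that a randomly chosen positive case scores higher than a randomly chosen negative case, with ties counting as half. The function name `auc` and the toy scores are illustrative only and do not come from any particular paper or EHR.

```python
from itertools import product


def auc(scores, labels):
    """AUC as the share of (positive, negative) pairs ranked correctly.

    A tie between a positive and a negative score counts as half a win.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative case")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p, n in product(pos, neg)
    )
    return wins / (len(pos) * len(neg))


# Toy data (made up for illustration): three true cases, three non-cases.
labels = [1, 1, 1, 0, 0, 0]

# Every true case outranks every non-case: the perfect radar.
perfect = auc([0.9, 0.8, 0.7, 0.3, 0.2, 0.1], labels)

# A constant score carries no signal: no better than chance.
useless = auc([0.5, 0.5, 0.5, 0.5, 0.5, 0.5], labels)

# A mixed ranking: 6 of the 9 pairs are ordered correctly.
middling = auc([0.9, 0.4, 0.6, 0.5, 0.2, 0.7], labels)

print(perfect, useless, round(middling, 3))
```

The pairwise loop is quadratic in the number of cases, which is fine for a toy example; for real datasets a sorted-rank implementation would be the more sensible choice.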
